NVIDIA offers a variety of GPUs and graphics cards, each with its own naming pattern. Most of us know and love the GeForce range of gaming and consumer graphics cards.

The prefix GTX or RTX identifies the graphics technology; RTX cards were the first to support real-time ray tracing. The number on the card indicates its generation and where it falls within that generation. Ti cards are more powerful than non-Ti cards with the same model number. This article explains Nvidia Ti vs. non-Ti.
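The naming scheme described above can be sketched as a small parser. This is only an illustration: the function names, the regular expression, and the split of the number into "generation" (leading digits) and "tier" (last two digits) are assumptions made for this example, not an official Nvidia specification, and combined suffixes are not handled.

```python
import re
from typing import NamedTuple, Optional


class GeForceName(NamedTuple):
    prefix: str            # "GTX" or "RTX"
    generation: int        # leading digits of the model number, e.g. 30 for "3080"
    tier: int              # last two digits, e.g. 80 for "3080"
    suffix: Optional[str]  # "Ti", "Super", or None for a base model


# Hypothetical pattern covering only the name shapes discussed in this article.
_NAME_PATTERN = re.compile(r"^(GTX|RTX)\s*(\d{3,4})\s*(Ti|Super)?$", re.IGNORECASE)
_SUFFIXES = {"ti": "Ti", "super": "Super"}


def parse_geforce_name(name: str) -> GeForceName:
    """Split a name such as 'RTX 3080 Ti' into its parts."""
    match = _NAME_PATTERN.match(name.strip())
    if match is None:
        raise ValueError(f"unrecognized model name: {name!r}")
    prefix, number, suffix = match.groups()
    return GeForceName(
        prefix=prefix.upper(),
        generation=int(number[:-2]),
        tier=int(number[-2:]),
        suffix=_SUFFIXES[suffix.lower()] if suffix else None,
    )


def is_ti_of(candidate: str, base: str) -> bool:
    """True if `candidate` is the Ti variant of the base model `base`."""
    a, b = parse_geforce_name(candidate), parse_geforce_name(base)
    # Same prefix, generation, and tier; only the suffix differs.
    return a.suffix == "Ti" and b.suffix is None and a[:3] == b[:3]
```

For example, `is_ti_of("RTX 3080 Ti", "RTX 3080")` is true, while `is_ti_of("RTX 3070 Ti", "RTX 3080")` is false because the tier differs.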

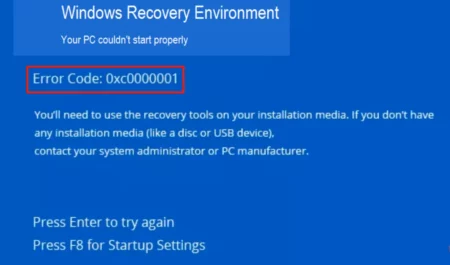

What Does Ti Stand for in GPU?

Ti stands for Titanium in Nvidia's graphics card branding. The Ti badge, a component of Nvidia's naming scheme for its GPUs, indicates that a particular graphics card is an enhanced, better-performing version of its standard, or non-Ti, counterpart.

The GeForce2 Ti 200, GeForce3 Ti 200, and GeForce3 Ti 500, all introduced in the fall of 2001, are some of the earliest examples of this naming convention. At the time, the GeForce2 Ti 200 was considered a less expensive alternative to the GeForce3 Ti 200 and GeForce3 Ti 500.

What is the Difference Between Ti and Normal GPU?

If you want to know how a Ti graphics card differs from the non-Ti base model, keep reading! If price is your main priority when buying a new graphics card, note that non-Ti GPUs typically cost less than their Ti counterparts. If money is not an issue and you are an avid gamer, a Ti GPU is well worth considering. For a mid-range gaming PC, a lower-end Ti GPU or a mid-range to higher-end non-Ti GPU is usually the ideal choice.

If you have the money and performance is essential to you, a Ti GPU makes sense. More performance is always welcome, and Ti cards are often nearly as fast as the next model up while costing less, as is the case with the RTX 3080 Ti and the RTX 3090.

What is the Ti in GTX?

Low to mid-range Ti cards are viewed as a stepping stone to the next level. This middle ground costs less than moving up a full tier and still gives you higher FPS. At the top end, though, Ti GPUs are among the most powerful graphics cards available, and the card alone can set you back as much as an entire top-tier gaming system.

If money is no object and you are an avid PC gamer, a Ti GPU is a strong investment. The cheaper Ti cards are a more practical choice for those of us who build our computers on a tight budget. Whatever you decide, remember that a Ti card will generally deliver higher FPS than the non-Ti card with the same model number.

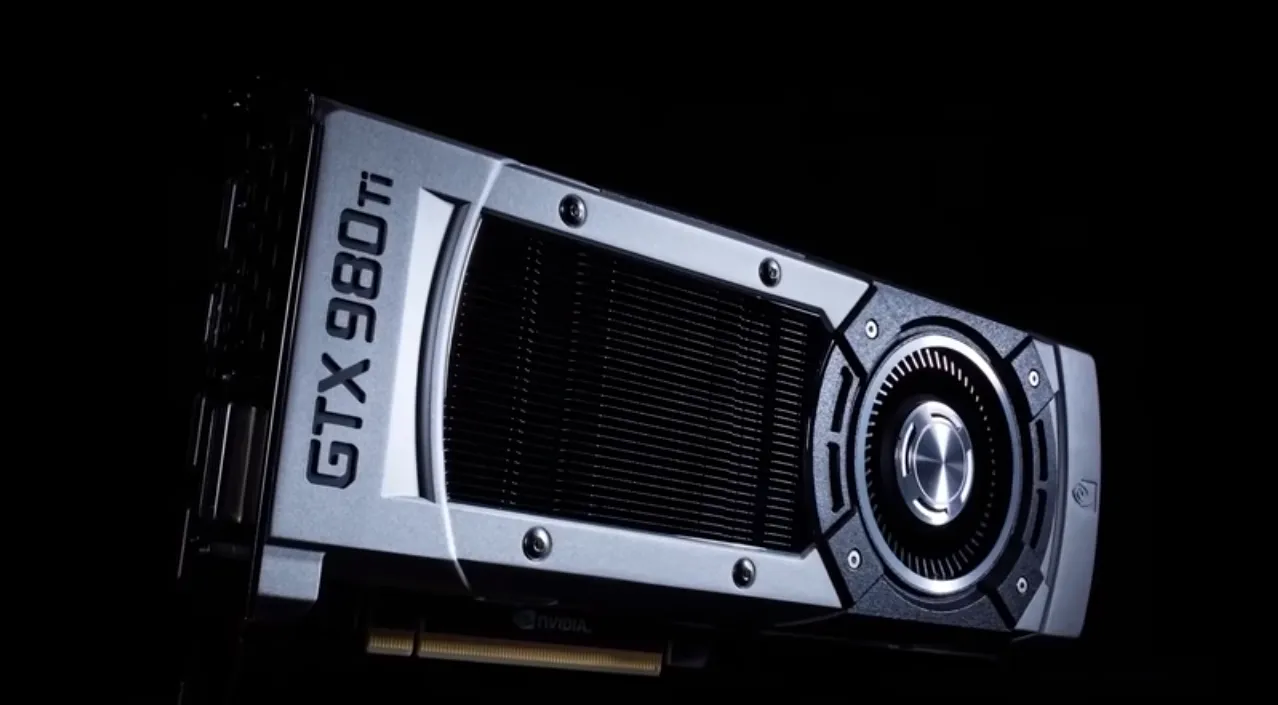

Which Ti Graphics Card is the Best?

Within its generation, the Nvidia RTX 3090 and AMD RX 6900 XT are the only graphics cards that can truly compete with the RTX 3080 Ti in terms of power. In the previous generation of Nvidia GPUs, the RTX 2080 Ti ruled the roost for two years before being surpassed by the RTX 30 series.

Nvidia Ti Vs Super

Here is the detailed information about Nvidia Ti Vs Super:

Nvidia Ti

Nvidia uses the Ti suffix to designate a higher-performing product, and the same general guidelines apply as with AMD's XT branding. Like an XT-branded card, a Ti card can have different clock rates, higher power requirements, and a different GPU specification than the base model.

AMD also refreshed the Radeon 6000 Series with the 6X50 update, which largely paired faster VRAM with the existing lineup. As always, look at the benchmarks before you buy.

Nvidia Super

The Nvidia Super series was a well-received refresh released in 2019 to compete with AMD's Radeon 5000 Series. In essence, Nvidia upgraded the GPU in each tier of graphics cards in exchange for a small price premium. The RTX 2060 Super uses a slightly less powerful version of the RTX 2070's GPU, and so on.

Nvidia could have used the Ti branding to distinguish the RTX 3080 10GB from the RTX 3080 12GB, since their specification differences are similar to those between the RTX 3070 and RTX 3070 Ti. However, there was already an RTX 3080 Ti, which essentially had an RTX 3090 GPU, so the lineup ended up more confusing than it needed to be.

Many buyers were unhappy because this made shopping for the RTX 30 Series far too difficult. When Nvidia attempted the same thing with the RTX 40 Series, customer reaction was even worse. The RTX 4080 12GB and 16GB differ considerably in performance, so giving them nearly identical names was misleading.

What is CUDA Core?

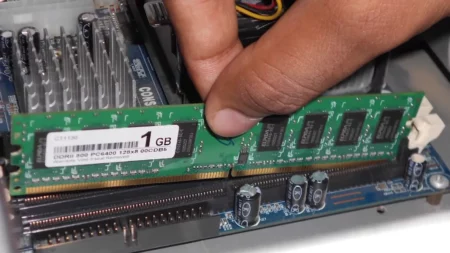

The Compute Unified Device Architecture (CUDA) cores on an Nvidia GPU are loosely comparable to CPU cores. They can perform many calculations simultaneously, which is important while playing a graphically intensive game.

The comparison only goes so far, though. A typical gaming processor can have up to 16 cores, whereas CUDA cores easily number in the hundreds. Each CUDA core is simpler and less capable than a CPU core, but they are deployed in much larger quantities.

On high-end GPUs, CUDA cores can number in the thousands. The goal is fast, efficient parallel computing: more CUDA cores allow more data to be processed in parallel. CUDA cores are only available on Nvidia GPUs, starting with the G8X series, across the GeForce, Quadro, and Tesla product lines. CUDA is supported on most common operating systems.

AMD's Stream Processors are the counterpart to Nvidia's CUDA cores. While CUDA cores and Stream Processors have different architectures and designs, they do the same task.
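To see why the number of cores matters for parallel work, here is a deliberately idealized sketch. It assumes every core processes exactly one work item (say, one pixel) per pass and ignores clock speed, memory bandwidth, and scheduling, all of which matter on real hardware. The function name and the 4,096-core figure are hypothetical, chosen only for illustration.

```python
import math


def passes_needed(work_items: int, cores: int) -> int:
    """Passes required if each core handles one item per pass (idealized model)."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return math.ceil(work_items / cores)


# One 1920x1080 frame, treating each pixel as one work item.
pixels = 1920 * 1080

cpu_like = passes_needed(pixels, 16)     # 16 cores, as on a high-end gaming CPU
gpu_like = passes_needed(pixels, 4096)   # hypothetical GPU with 4,096 cores

print(cpu_like, gpu_like)  # 129600 507
```

In this toy model the 4,096-core device needs 507 passes where the 16-core device needs 129,600. Real workloads do not scale this cleanly, but the direction of the effect is the same.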

Which is Preferable, 3070 Ti or 3080?

Compared to the 3070 Ti, the 3080 features more memory and more CUDA cores, which makes it the quicker of the two. The 3070 Ti is a good choice for gamers who want a high-end graphics card but don't want to spend as much as they would on a 3080. For gamers who demand the highest level of performance, the 3080 is preferable.

If you intend to play in 4K with your settings turned up, the 3080 is a solid performer and is worth purchasing. Even so, users who want to use their PC for tasks that require greater processing power, such as CAD or 3D rendering, should still give the 3080 Ti serious thought.

To get the most out of the 3080, you need a high-refresh-rate monitor of at least 140Hz; on a slower display, the card would be excessive. Depending on resolution and settings, the 3080 can exceed 100 FPS in many demanding games.

Only GeForce NOW RTX 3080 members will have access to the new servers, which will stream content at up to 1440p at 120 frames per second (FPS) on PC.

CUDA cores are parallel processors, and Nvidia GPUs can have hundreds or thousands of them. The cores process the data that passes through the GPU and perform the graphics calculations behind what the user sees on screen.
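The point about monitor refresh rate comes down to simple arithmetic: a display cannot show more frames per second than its refresh rate. The sketch below uses hypothetical function names and ignores side effects such as screen tearing and input latency.

```python
def displayed_fps(rendered_fps: float, refresh_hz: float) -> float:
    """Frames per second the monitor can actually show."""
    if rendered_fps < 0 or refresh_hz <= 0:
        raise ValueError("rendered_fps must be >= 0 and refresh_hz must be > 0")
    return min(rendered_fps, refresh_hz)


def unseen_fps(rendered_fps: float, refresh_hz: float) -> float:
    """Rendered frames per second that never reach the screen."""
    return rendered_fps - displayed_fps(rendered_fps, refresh_hz)


# A GPU rendering 100 FPS on a 60Hz monitor versus a 144Hz monitor.
print(displayed_fps(100, 60), unseen_fps(100, 60))    # 60 40
print(displayed_fps(100, 144), unseen_fps(100, 144))  # 100 0
```

On the 60Hz display, 40 of every 100 rendered frames are never shown, which is why a fast card paired with a slow monitor is largely wasted.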